Architecture

How CopilotKit's pieces fit together — the three-layer stack (frontend, runtime, agent) and the AG-UI protocol that bridges them.

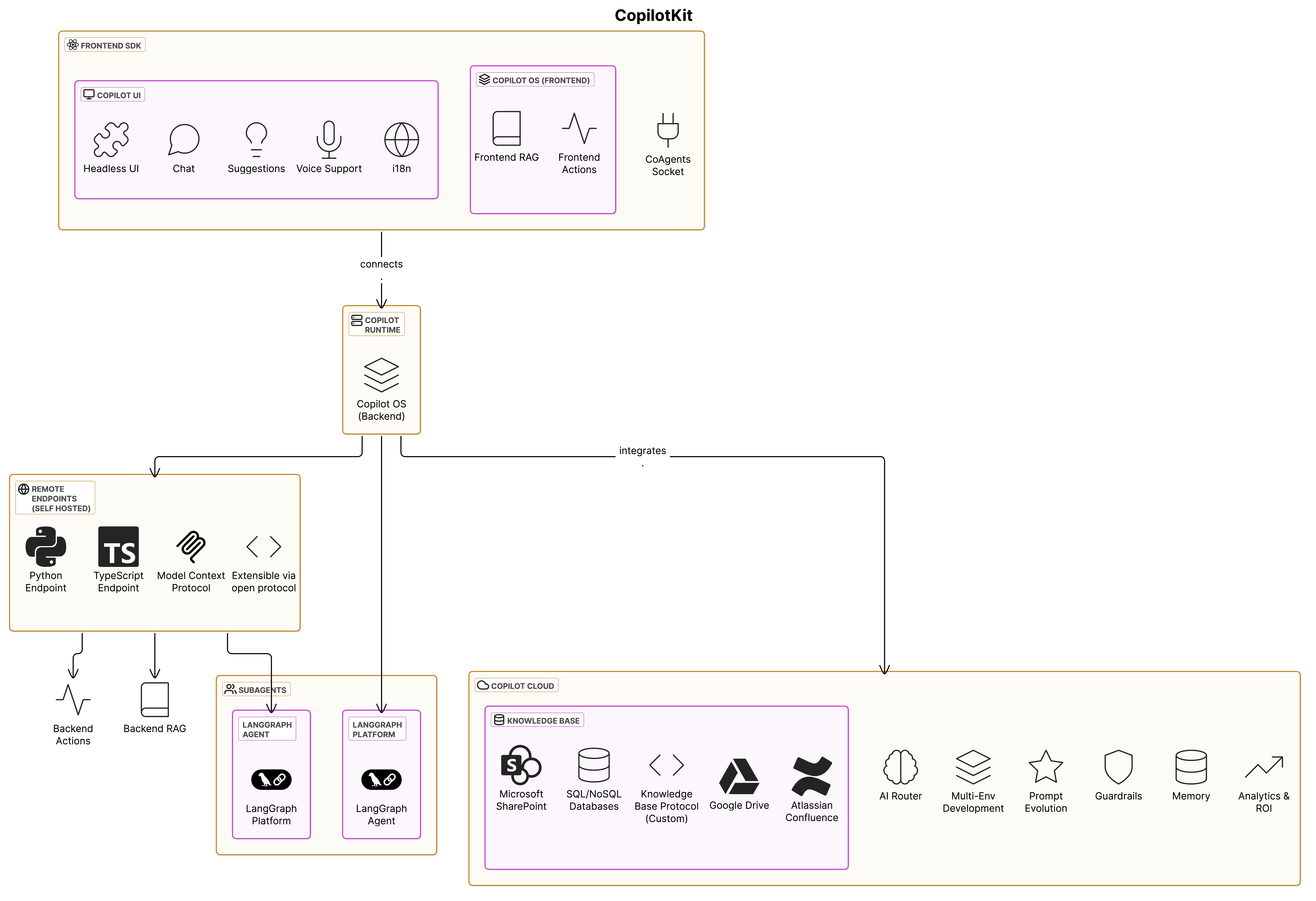

CopilotKit is a three-layer stack that connects your React frontend to any agent framework over a single open protocol. Understanding the layers — and the wire between them — is the fastest way to build the right mental model before touching code.

The three layers

1. Frontend

The React app your users interact with. CopilotKit ships hooks (useAgent, useFrontendTool, useAgentContext, useThreads) and prebuilt components (CopilotChat, CopilotSidebar, CopilotPopup) that connect your UI to a running agent. Use the prebuilt chat surface, build a fully custom UI with the headless hooks, or mix the two.

2. Runtime

A request handler that mounts inside your application server (Next.js App Router, Express, Hono, Bun, Deno, Cloudflare Workers). The runtime accepts requests from the frontend, mediates auth and tool calls, and forwards work to your agent over AG-UI. For the framework-agnostic path you can instantiate a BuiltInAgent in-process and skip an external agent process entirely.

3. Agent

The agent backend you choose: LangGraph, Mastra, CrewAI, Pydantic AI, Microsoft Agent Framework, the Built-in Agent, or any custom AG-UI-compatible implementation. The agent runs your prompt, calls tools, emits state, and streams events back to the runtime.

AG-UI: the protocol bridge

CopilotKit doesn't lock you into one agent framework. The runtime talks to your agent over AG-UI, an open, event-driven protocol that standardizes how agents communicate with applications:

- Event-driven — agents emit any of 16 standardized event types as they execute (text deltas, tool calls, state snapshots, state deltas, run lifecycle), creating a stream the runtime forwards to the frontend.

- Bidirectional — users send input and agents respond; agents can pause for human-in-the-loop input, and they can invoke frontend tools that your app defines and runs in the browser.

- Transport-agnostic — SSE, WebSockets, webhooks, or whatever your stack prefers. AG-UI doesn't care how the bytes move.

- Framework-agnostic — every supported integration ships a thin AG-UI adapter. Switch backends by changing one line of runtime configuration.
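To make the event-driven model concrete, here is a minimal sketch of how a frontend might fold a stream of agent events into renderable state. The event names are loosely modeled on AG-UI event types, but the field shapes, the `ViewState` type, and the `applyEvent` function are assumptions made for illustration, not CopilotKit's or AG-UI's actual types.

```typescript
// Hypothetical event shapes, loosely modeled on AG-UI event names.
// Only a handful of event types are covered here.
type AgentEvent =
  | { type: "RUN_STARTED"; runId: string }
  | { type: "TEXT_MESSAGE_CONTENT"; messageId: string; delta: string }
  | { type: "TOOL_CALL_START"; toolCallId: string; toolCallName: string }
  | { type: "STATE_SNAPSHOT"; snapshot: Record<string, unknown> }
  | { type: "RUN_FINISHED"; runId: string };

// Illustrative UI-side state; not a CopilotKit type.
interface ViewState {
  running: boolean;
  messages: Record<string, string>; // messageId -> accumulated text
  toolCalls: string[]; // names of tools the agent has started calling
  agentState: Record<string, unknown>; // latest state snapshot from the agent
}

const initialView: ViewState = {
  running: false,
  messages: {},
  toolCalls: [],
  agentState: {},
};

// Pure reducer: each incoming event produces the next view state.
function applyEvent(view: ViewState, event: AgentEvent): ViewState {
  switch (event.type) {
    case "RUN_STARTED":
      return { ...view, running: true };
    case "TEXT_MESSAGE_CONTENT": {
      // Text arrives as deltas, so append to whatever was already streamed.
      const previous = view.messages[event.messageId] ?? "";
      return {
        ...view,
        messages: { ...view.messages, [event.messageId]: previous + event.delta },
      };
    }
    case "TOOL_CALL_START":
      return { ...view, toolCalls: [...view.toolCalls, event.toolCallName] };
    case "STATE_SNAPSHOT":
      // A snapshot replaces the agent state wholesale.
      return { ...view, agentState: event.snapshot };
    case "RUN_FINISHED":
      return { ...view, running: false };
  }
}

// A made-up stream, in the order an agent might emit it.
const sampleStream: AgentEvent[] = [
  { type: "RUN_STARTED", runId: "r1" },
  { type: "TEXT_MESSAGE_CONTENT", messageId: "m1", delta: "Hello, " },
  { type: "TOOL_CALL_START", toolCallId: "t1", toolCallName: "lookupOrder" },
  { type: "STATE_SNAPSHOT", snapshot: { step: "lookup" } },
  { type: "TEXT_MESSAGE_CONTENT", messageId: "m1", delta: "world" },
  { type: "RUN_FINISHED", runId: "r1" },
];

const finalView = sampleStream.reduce(applyEvent, initialView);
```

Because the reducer is pure, the same function can drive a live stream or replay a recorded one. In a real app the prebuilt components and hooks handle this for you.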

"The future of agents isn't one company or one platform — it's an agentic ecosystem connected by protocols."

A protocol-based architecture means you can swap the agent layer without rewriting the frontend, run multiple agent backends side by side, and integrate with any AG-UI-compatible tool from the broader ecosystem — MCP servers, A2UI components, Oracle / Google / AWS agent platforms.
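The swap-the-agent idea can be sketched as a small adapter contract. Everything below (`AgentAdapter`, `echoAgent`, `shoutAgent`, `handleMessage`, and the shape of the config object) is hypothetical and exists only to show the pattern; CopilotKit's real runtime configuration looks different.

```typescript
// Hypothetical adapter contract: any backend that can turn a user message
// into a stream of text deltas fits behind it.
interface AgentAdapter {
  readonly name: string;
  run(userMessage: string): Iterable<string>;
}

// Two stand-in backends with different behavior but the same contract.
const echoAgent: AgentAdapter = {
  name: "echo",
  *run(userMessage: string): Generator<string> {
    yield "echo: ";
    yield userMessage;
  },
};

const shoutAgent: AgentAdapter = {
  name: "shout",
  *run(userMessage: string): Generator<string> {
    yield userMessage.toUpperCase();
    yield "!";
  },
};

const agents: Record<string, AgentAdapter> = {
  echo: echoAgent,
  shout: shoutAgent,
};

// The caller never names a concrete backend; it only reads the config.
function handleMessage(config: { agent: string }, userMessage: string): string {
  const agent = agents[config.agent];
  if (!agent) {
    throw new Error(`Unknown agent: ${config.agent}`);
  }
  return Array.from(agent.run(userMessage)).join("");
}

// Switching backends is a one-line change to this value.
const runtimeConfig = { agent: "echo" };
const reply = handleMessage(runtimeConfig, "hi");
```

The frontend-facing function stays the same when `runtimeConfig.agent` changes from `"echo"` to `"shout"`, which is the property the protocol boundary is meant to preserve.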

Request flow at a glance

- User sends a message in your React app.

- Frontend useAgent hook posts to your runtime endpoint.

- Runtime opens an AG-UI session with the configured agent.

- Agent emits text, tool calls, and state updates as AG-UI events.

- Runtime streams the events back; the frontend renders them in real time.

- If the agent calls a frontend tool, the runtime relays the request, your hook handler runs in the browser, and the result flows back to the agent.

- Threads, persistence, and realtime sync (when configured) are mediated by the Enterprise Intelligence Platform — the Enterprise backend that sits beside the runtime.
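The flow above, including the frontend-tool round trip, can be simulated in memory. This is a toy sketch under stated assumptions: `demoAgent`, `runTurn`, the `FlowEvent` shapes, and the `getTheme` tool are all invented for illustration, and the real runtime streams events over the network rather than through a synchronous generator.

```typescript
// Hypothetical, simplified events for one turn of conversation.
type FlowEvent =
  | { kind: "text"; delta: string }
  | { kind: "frontendToolCall"; name: string; args: Record<string, unknown> }
  | { kind: "done" };

// A frontend tool handler: runs in the browser and returns a result string.
type ToolHandler = (args: Record<string, unknown>) => string;

// Stand-in agent. It emits text, asks for a frontend tool, then continues
// once the tool result is passed back in through next().
function* demoAgent(
  userMessage: string
): Generator<FlowEvent, void, string | undefined> {
  yield { kind: "text", delta: `You asked: ${userMessage}. ` };
  const toolResult = yield { kind: "frontendToolCall", name: "getTheme", args: {} };
  yield { kind: "text", delta: `Your theme is ${toolResult ?? "unknown"}.` };
  yield { kind: "done" };
}

// Stand-in runtime: opens a session with the agent, forwards each event,
// and relays frontend tool calls to the matching handler.
function runTurn(
  userMessage: string,
  frontendTools: Record<string, ToolHandler>
): { text: string; log: string[] } {
  const log: string[] = [];
  let text = "";
  const session = demoAgent(userMessage);
  let step = session.next();
  while (!step.done) {
    const event = step.value;
    log.push(event.kind);
    let reply: string | undefined;
    if (event.kind === "text") {
      text += event.delta; // the frontend would render this incrementally
    } else if (event.kind === "frontendToolCall") {
      const handler = frontendTools[event.name];
      reply = handler ? handler(event.args) : undefined;
    }
    // The tool result (if any) flows back to the agent here.
    step = session.next(reply);
  }
  return { text, log };
}

const turn = runTurn("hi", { getTheme: () => "dark" });
```

After this runs, `turn.text` holds the full streamed reply and `turn.log` records the order in which event kinds arrived.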

Where to go next

- Practical setup — Quickstart walks through wiring all three layers in ~10 minutes against the Built-in Agent.

- Protocol depth — AG-UI documentation covers every event type, transport option, and middleware hook.

- Backend choices — Agents & Backends explains the runtime, custom agents, and the trade-offs between Built-in, external frameworks, and bring-your-own.

- Enterprise deployment — Enterprise Intelligence Platform covers Threads, Persistence, Observability, and the hosted-vs-self-hosted decision.